The Heritage and Legacy of M (MUMPS) – and the Future of YottaDB

K.S. Bhaskar

Legacy and Heritage

In computing, the term legacy system has come to mean an application or a technology originally crafted decades ago, one that is important to the success of an enterprise, and that at least some people consider obsolete.[1]

But age alone does not make something obsolete – we still read and appreciate Shakespeare four centuries after his death, and paper clips from over 100 years ago are still familiar to us today. We must recognize that software is also part of our technical and cultural heritage (see Software Heritage).

As in much else in our daily lives, legacy and heritage are intertwined.

The Heritage of MUMPS

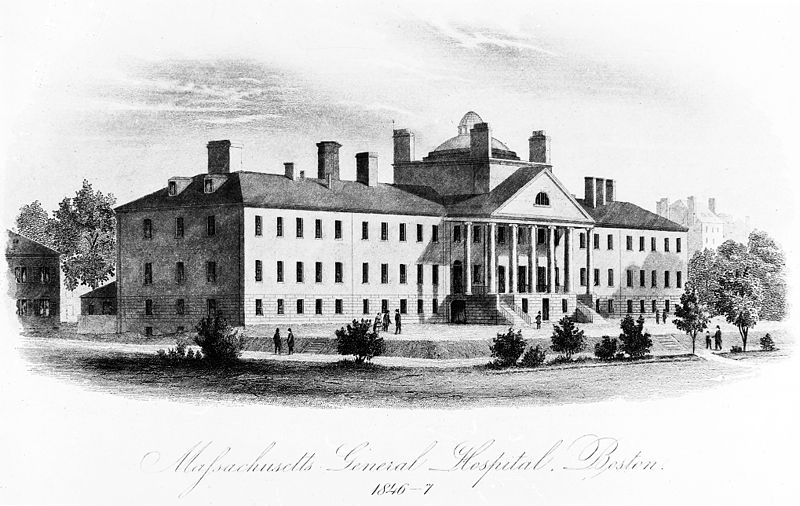

In the late 1960s, if an application needed a database, it typically used IMS on a mainframe. IMS was capable, but expensive to use. It was also a time when the minicomputer industry was booming, especially in the technology belt around Boston. Researchers at Massachusetts General Hospital in Boston could afford minicomputers and, aided by talent from the Massachusetts Institute of Technology just across the Charles River, created MUMPS – Massachusetts General Hospital Utility Multi-Programming System.

The key feature of MUMPS was hand-in-glove integration between a line-oriented, third-generation, procedural language (superficially not unlike Basic) and a schema-less, hierarchical, sparse, associative-memory, key-value database – the original NoSQL database, although the term had not been invented then. The fact that a database access was syntactically just an array reference made programmers exceptionally productive. MUMPS included features that are commonplace today but were ahead of their time, including interactive, multi-user computing and just-in-time compilation with dynamic linking.
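To make the data model concrete, here is a minimal sketch in Python of a sparse, hierarchical key-value store in which every node is addressed like an array reference, by a name plus a list of subscripts. The class `SparseTree` and its method names are hypothetical, invented for this illustration; it is an in-memory toy, not how MUMPS or any implementation of it actually stores data.

```python
class SparseTree:
    """Toy in-memory model of a schema-less, hierarchical, sparse key-value store."""

    def __init__(self):
        # Only nodes that were explicitly set exist; nothing is pre-declared.
        self._nodes = {}

    def set(self, name, subscripts, value):
        """Store a value at name(subscript1, subscript2, ...)."""
        self._nodes[(name, *subscripts)] = value

    def get(self, name, subscripts, default=""):
        """Fetch the value at a node, or a default if the node was never set."""
        return self._nodes.get((name, *subscripts), default)

    def children(self, name, subscripts=()):
        """Return the distinct next-level subscripts below a node.

        Numbers are sorted before strings (an assumption of this sketch).
        """
        prefix = (name, *subscripts)
        depth = len(prefix)
        found = {key[depth] for key in self._nodes
                 if len(key) > depth and key[:depth] == prefix}
        return sorted(found, key=lambda s: (isinstance(s, str), s))


db = SparseTree()
db.set("Patient", (42, "name"), "Ada")
db.set("Patient", (42, "visits", 1), "checkup")
db.set("Patient", (7, "name"), "Grace")

print(db.get("Patient", (42, "name")))   # Ada
print(db.children("Patient"))            # [7, 42]
print(db.children("Patient", (42,)))     # ['name', 'visits']
```

Note that no schema is declared anywhere: a node comes into existence when it is set, and subtrees of different records can have different shapes.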

Its robustness, low cost, programmer productivity, and ease of use made MUMPS a de facto standard for clinical and life-sciences computing. The largest and most successful electronic health record systems are written in MUMPS, or derivatives of MUMPS, including a nation-scale EHR deployment.

The GT.M implementation of MUMPS extended the innovation, bridging the gap between MUMPS and traditional programming languages by compiling source code to object code in a standard OpenVMS object format that could be linked with code written in Pascal, C, etc. On UNIX/Linux, it was the first MUMPS implementation whose object code could be placed in shared libraries with the standard ld utility. It pioneered logical multi-site application configurations unconstrained by geography many years before major brand-name databases did.

With secure, robust, high-performance transaction processing based on optimistic concurrency control, the Profile/GT.M application set records: it was the first real-time core-banking application to break through 1,000 online transactions per second, and the world’s first core-banking use of Linux.
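For readers unfamiliar with the term, optimistic concurrency control lets a transaction proceed without taking locks up front, then checks at commit time whether anything it read has changed, and restarts if so. The following is a minimal single-process sketch of that general idea in Python. The names `Store`, `Transaction`, and `run`, and the `max_tries` limit, are all hypothetical; this illustrates the technique only and makes no claim about how GT.M or YottaDB implement it.

```python
class Store:
    """Shared data plus a per-key count of committed writes."""

    def __init__(self):
        self.data = {}
        self.version = {}


class Transaction:
    """Buffers writes and remembers the version of every key it reads."""

    def __init__(self, store):
        self.store = store
        self.read_versions = {}
        self.writes = {}

    def get(self, key, default=0):
        if key in self.writes:              # read our own pending write
            return self.writes[key]
        self.read_versions.setdefault(key, self.store.version.get(key, 0))
        return self.store.data.get(key, default)

    def set(self, key, value):
        self.writes[key] = value            # not visible to others until commit

    def commit(self):
        # Validate: fail if any key we read was committed by someone else since.
        for key, seen in self.read_versions.items():
            if self.store.version.get(key, 0) != seen:
                return False
        for key, value in self.writes.items():
            self.store.data[key] = value
            self.store.version[key] = self.store.version.get(key, 0) + 1
        return True


def run(store, body, max_tries=4):
    """Run body(txn) until it commits; return the number of attempts used."""
    for attempt in range(1, max_tries + 1):
        txn = Transaction(store)
        body(txn)
        if txn.commit():
            return attempt
    raise RuntimeError("transaction did not commit")


store = Store()
store.data.update({"alice": 100, "bob": 50})
calls = {"n": 0}

def transfer(txn):
    calls["n"] += 1
    balance = txn.get("alice")
    if calls["n"] == 1:
        # Simulate a competing transaction that commits in the middle of ours.
        other = Transaction(store)
        other.set("alice", other.get("alice") + 10)
        other.commit()
    txn.set("alice", balance - 30)
    txn.set("bob", txn.get("bob") + 30)

print(run(store, transfer))   # 2
print(store.data)             # {'alice': 80, 'bob': 80}
```

The first attempt fails validation because the competing deposit changed a value it had read; the second attempt sees the updated balance and commits, so no update is lost.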

The Legacy of MUMPS

The legacy of MUMPS is a direct consequence of its heritage, and in particular derives from its success.

- To provide dynamic program execution in computers with very limited memory, early MUMPS implementations directly interpreted source code, as shells do today. For efficient execution, this resulted in single-character abbreviations for commands (e.g., “s” instead of “set”) and a cultural preference for short variable names. Combined with the line-oriented nature of the language, this meant that program lines were dense and information-rich compared with code in its peers such as C. Dense programs were an asset when the programmer’s interface to the computer was an actual terminal.[2]

- The complexity of major applications necessarily mirrors the business complexity of the large enterprises they support. Furthermore, for real-time, patient-centric healthcare applications, application schema complexity necessarily mirrors that of the human body. Medical care is one of the most complex arenas of human endeavor; banking and financial services, the other major area of MUMPS’ success, is not far behind.

- The success of MUMPS means that MUMPS application code is long-lived. Twenty-first century applications can include code from the 1970s, written to different standards. Even if it has evolved since, it is not inconceivable for a young programmer today to encounter code originally developed by a grandparent.

Consequently, for novice programmers, mastery of an enterprise-scale MUMPS application comes slowly, and it is easy to conflate application complexity with the programming language. “A Case of the MUMPS” is a rant by a programmer who struggles with the learning curve but raises the same issues more expressively than I do.

By way of analogy, the narrow streets of historical cities can be challenging to drive through, even as their continued existence speaks to the very success of those cities. Especially in a historical city, one cannot start excavating for a new development before first accounting for how it will affect the city’s existing structure. MUMPS applications have much in common with cities like London, Paris, Rome, and Athens in that their continued relevance speaks to their success, even as their history complicates their ongoing evolution.

As a further complication, owing to a series of unfortunate events, one MUMPS vendor was able to acquire all other major MUMPS implementations bar GT.M. Thereafter, it dropped the name MUMPS and any pretense of participating in standards. In a strategy that built an enclosure with high walls around its customers, it evolved MUMPS into a language that is its own proprietary product. Since the world will not adopt a non-standard, proprietary language that is easy to get into but hard to get out of, this relegated its proprietary language to the evolutionary backwaters of programming languages. In turn, this slowed the development of MUMPS, which did not acquire some features of modern programming languages, such as information encapsulation.

The Way Forward for YottaDB

YottaDB is a proven, rock-solid, lightning-fast, and secure NoSQL database for all programmers, whether or not they are using MUMPS.

For those already developing in MUMPS or considering MUMPS: since YottaDB is built on the GT.M code base and written to ensure backwards compatibility, YottaDB is, and will continue to be, an implementation of MUMPS, and a drop-in replacement for GT.M.

For programmers in other languages: effective r1.20, YottaDB exposes a C API. As C is the lingua franca of programming languages, in that code in virtually any language can call a C API, this makes the YottaDB database accessible to all programmers. In the future, we anticipate creating standard, language-specific wrappers for other popular languages so that programmers in those languages do not need to code or maintain a call to a C API.

If enterprise-scale applications are like cities, YottaDB is their infrastructure, proven today through years of use, and innovating and evolving for tomorrow. Whether you seek to replace an existing NoSQL database in an application, to extend an existing application – for example, by building solutions for business intelligence, data warehousing, or machine intelligence to augment it – or to build a new Internet of Things application stack, YottaDB is a proven solution for all your needs.

[1]. Of course, it is a completely different discussion as to who considers something obsolete and why. It is quite likely that application vendors who wish to sell new applications, or consultants who wish to sell migration services, are the ones who describe applications as “legacy.”

[2]. Unlike a window that you can resize to see more information, and whose font you can shrink to suit your needs, a terminal had a predetermined fixed maximum number of lines and columns to act as a window into a program’s code. Also, communication between the terminal and the computer was over a serial connection that operated at a very small fraction of the data rate of today’s networks.

Published on February 14, 2018

![agracier - NO VIEWS [CC BY-SA 3.0 (https://creativecommons.org/licenses/by-sa/3.0)], via Wikimedia Commons](https://yottadb.com/wp-content/uploads/2018/02/340px-Side_Entrance_to_St_Pauls_Church_-_panoramio-170x300.jpg)