YottaDB r1.22 is a minor release, primarily intended to make available in a YottaDB release the enhancements and fixes in the upstream GT.M V6.3-004 release.

YottaDB r1.22 is built on (and except where explicitly noted, upward compatible with) both YottaDB r1.20 and GT.M V6.3-004.

Highlights of the release include:

- ydb_* prefixed environment variable equivalents to gtm* prefixed environment variables. The latter continue to be supported, with the former taking precedence when both are defined.

- An additional option to limit the impact of MUPIP REORG on system IO throughput.
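The precedence rule for the two families of environment variables can be sketched as follows. This is only an illustration of the rule stated above, not how YottaDB implements it: the helper `resolve_setting` is hypothetical, the `ydb_gbldir` / `gtmgbldir` pair and the paths are used purely as an example, and treating "defined" as "present in the environment" is an assumption.

```python
import os

def resolve_setting(ydb_name, gtm_name, environ=None):
    """Return the ydb_* value if it is defined, else the gtm* value, else None.

    Hypothetical helper: "defined" is assumed to mean "present in the environment".
    """
    env = os.environ if environ is None else environ
    if ydb_name in env:
        return env[ydb_name]
    return env.get(gtm_name)

# Illustrative environment in which both names are defined.
example_env = {"ydb_gbldir": "/data/new.gld", "gtmgbldir": "/data/old.gld"}
print(resolve_setting("ydb_gbldir", "gtmgbldir", example_env))
```

With both variables defined, the ydb_* value is returned; removing it from `example_env` makes the lookup fall back to the gtm* value.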

As with every YottaDB release, r1.22 includes a number of smaller enhancements in functionality and performance, as well as remediation of issues as detailed in the Release Notes.

See the Get Started page to use YottaDB.

Tarball Hashes

| sha256sum | File |
| --- | --- |
| dab80777a1d4d5be52f7c5d0749d8701f383aa8601eb9037884b14512d2b854d | yottadb_r122_linux_armv6l_pro.tgz |
| 62dce638fee3fbac38de2c0f495730ab52abd05bf622d6ab7c89c98718dd5185 | yottadb_r122_linux_armv7l_pro.tgz |
| 7c187371924429e30d3254cd17fc1b9d9fb3ac3792254880bec250be728ef056 | yottadb_r122_linux_x8664_pro.tgz |
| ac4cc25b1d1de4e3571aa8a5b448a584931095f9e7f1a9208c034ecc42d6f7f5 | yottadb_r122_rhel7_x8664_pro.tgz |
| 44678b658fe6d1396b02250c837826c97aaf2706d9963a70aac6d0ea78085c9b | yottadb_r122_src.tgz |

YottaDB r1.20 is the most significant release to date from the YottaDB team. With the ability to call the data management engine directly from C, and eliminating the need for any application code to be written in M (by separating the M language from the database without compromising either in any way), YottaDB r1.20 represents a historic milestone for the YottaDB/GT.M code base.

YottaDB r1.20 is built on (and except where explicitly noted, upward compatible with) both YottaDB r1.10 and GT.M V6.3-003A.

Highlights of enhancements and fixes made by the YottaDB team include:

- A C API to call the database management engine directly. As C is the lingua franca of computer languages, this makes the engine accessible from other languages. In the future, we anticipate creating standard wrappers to the engine from other languages, and we invite members of the community to do so as well.

- An all-new manual, the Multi-Language Programmers Guide to access the C API.

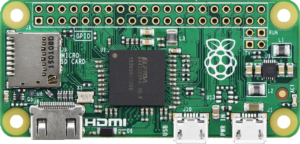

- At US $5 in retail quantities, a new Supported platform, the Raspberry Pi Zero is the lowest cost platform ever for the code base. This and the halving of the storage footprint of the database engine itself (14-15MB), makes YottaDB attractive for Internet of Things applications. Our blog post Edge Computing and YottaDB Everywhere touches on the benefits of YottaDB on low cost computing platforms.

- Docker containers make it easy to get up and running with YottaDB for experimentation, DevOps and Microservices.

- Performance improvements (up to two orders of magnitude in specific test cases) in large local arrays and garbage collection, especially in applications with large numbers of strings that change often.

- The READ command supports a buffer of the same number of prior inputs that direct mode does.

Highlights from the upstream V6.3-003A code base, thanks to the GT.M team include:

- Performance improvements when there are a large number of concurrent locks.

- Introduced as field test grade functionality in a production release, the ability for a process to associate itself with different instances at run-time.

As is the case in every release, YottaDB r1.20 brings other enhancements, e.g., callin when an application is already inside a transaction processing fence, and sending syslog messages to stderr when there is no syslog, as well as fixes to issues, all detailed in the Release Notes.

See our Get Started page to use YottaDB.

Tarball Hashes

| sha256sum | File |
| --- | --- |
| cd26897549405b33e63966df52aefb8ad581afd1633db1cb2723ff2c12acce25 | yottadb_r120_linux_armv6l_pro.tgz |
| 8993fbb7300cb732da06e90bc7cb1334e9ab5318da7d0b7427900be8919aa640 | yottadb_r120_linux_armv7l_pro.tgz |
| 6e7bf4c1fa0b12e29fa2b0e1629bfdaaeebd0541c458eaf561d5676d1f0fc5e6 | yottadb_r120_linux_x8664_pro.tgz |
| e32dc5ffbdd1e8fd17d4ed2f1df97145f05d5748489f2b5d8322ad9ee33008ce | yottadb_r120_rhel7_x8664_pro.tgz |
| f4310725ff72ff6bd5da41fc0b3eaf5ab918978ce33d08878ed717c1d1cf04c4 | yottadb_r120_src.tgz |

Bottom-Line Up Front – Computing on the Edge is Booming

Inexpensive System-on-a-Chip (SoC) computers are a game changer. With the BCM2835 chip, a Raspberry Pi Zero retails for $5, which means the SoC most likely costs less than $1 in bulk. That chip is roughly comparable to a 300MHz Pentium 2 CPU with the video capabilities of an original Xbox and is just one example of what is available in the market today. SoCs allow significant computing capabilities to be built into smart sensors and devices to enable ubiquitous computing.

Growth in computing driven by Moore’s Law since the 1960s resulted in waves back and forth between the cloud and the edge, with growth in one leading and producing complementary growth in the other. The current wave is on the edge, and still growing.

YottaDB powers computing on the edge too, not just in the cloud.1

Defining Terms

Let me define some terms as I use them:

- Computing is the process of calculating desired outputs from inputs. Inputs and outputs may be representational (such as a news report or a paycheck stub), or connected to sensors and actuators (such as a building’s climate control system).

- Real-time computing is the process of calculating desired outputs from input in bounded time. For example, even if the route it recommends is less than optimal, a navigation app must respond in real-time, because there is no value in telling a driver, “Turn right ¼ mile back.”

- Computing on the edge is computing performed at or near the source of inputs and destination of outputs, typically not in a physically secured environment.2 “At or near” means that the time, cost, and robustness of moving data is assured. While a self-driving car can use the cloud to help it navigate, because the worst consequence is a sub-optimal route, edge computing must autonomously recognize and brake for a pedestrian in front.

The First Wave – in the Data Center

Through most of the 1960s, computing was centralized. The first computer I ever programmed, an IBM 1620, had its own room in an air-conditioned computer center.3 “Computing” at the edge was limited to analog computers, such as airplane autopilots. For anything more complex, data moved between where it was generated and consumed (the edge) to where it was computed (the data center) via Sneakernet in the form of punched cards, punched tape, and magnetic tape. Computing was most commonly “batch” processing, where one submitted a “job” on a deck of punched cards, and received the output hours, and occasionally days, later.

The Second Wave – Interactive / Personal Computing

Although the actual computing logic was still in the data center, the input could be produced, and output consumed, interactively on teletypewriters. Dropping costs and improvements in communication and hardware made computing economical outside the data center, in what we today call the edge.

Microprocessors like the Intel 8080 performed computation on the edge, originally in intelligent terminals. The growth of processing power and dropping prices allowed intelligent terminals to evolve into ever more capable personal computers. With the development of office suites, games, encyclopedias, and more, personal computing took off, leading to a boom in computing at the edge.

Change in the edge caused change in data centers. New online services in data centers allowed users in widely separated locations to interact in real time; a few, such as AOL and CompuServe, still exist today.

The Third Wave – from Data Center to Cloud

Pre-Internet online services were well tended, walled gardens organized to navigate within them, but you were out of luck if you needed something outside their guarded walls. In contrast, the Internet was more like a wide-open plain, driven by better, faster, and cheaper communication, organized around open communications protocols such as the Hypertext Transfer Protocol (HTTP) that allowed anyone to participate in the ecosystem. While you could get information you wanted on “the web”, locating it was not always straightforward, leading to the popularity of search engines and browsers.

The growth of the web meant that browsers became ubiquitous, as did web servers that fed them data. From providing roadmaps to information on the web, it was a logical progression to other web-based services such as e-mail. Instead of lugging a PC around with downloaded e-mail, it became so much easier to leave the e-mail with an online service so that you could access it from whatever device you had available. Collaboration became easier and more timely than ever before. I write these words on an online service provider4 rather than on an office suite on my laptop, making it that much easier and faster for my good friends and best critics – my editors – to prepare it for publication.

This also meant that IT departments no longer had to maintain infrastructure for commodity services but could rely on the economies of scale of cloud service providers. The cloud allows information to reside just about anywhere – Gandi, our web hosting provider, serves YottaDB web content to browsers around the world from a data center in Luxembourg.

While PC growth has stalled, and no one buys dedicated GPS units any more, the growth of the cloud was a beneficial catalyst for change at the edge. Technologies converged, and the basic cellphone morphed into a smartphone whose value as a platform for “apps” served by the cloud exceeds its value as a device for telephony.

Today’s Fourth Wave – Life on the Edge, or The Internet of Things

Compared to the IBM 7094 computers that controlled the Apollo lunar missions, a Raspberry Pi Zero has orders of magnitude more processing power, memory, and storage. Coupled with the growth in lightweight, inexpensive, low-power sensors, we are in the middle of an explosion of data generation on the edge.

Consider a pump. It should spin fast to maximize the flow of liquid, but not so fast that the flow past the blades is turbulent (causing a loss of pumping efficiency), or that little bubbles form, resulting in cavitation damage. With an inexpensive resistance strain gauge on it, connected to inexpensive hardware like a Raspberry Pi Zero, using YottaDB to store the data, one can measure the vibration of the pump. Perform a Fast Fourier Transform (FFT) on the data, and when the pump is operating with laminar flow of the liquid past the blades, the FFT will have spikes at harmonics of the pump’s rotational speed with a certain baseline of noise between the spikes. As the flow past the blades becomes turbulent, that noise floor rises, and when cavitation starts to occur, it rises sharply. The software in the Raspberry Pi can control the speed of the pump to continuously operate it at maximum efficiency. This solution can be built into new pumps as well as retrofitted to hundred-year-old pumps.
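To make the noise-floor idea concrete, here is a rough sketch in Python. Everything specific in it is an assumption for illustration: the simulated vibration signal, the sample count, the bin that holds the rotational speed, and the use of the median magnitude between spikes as the "noise floor". A real device would read the strain gauge and would likely use an optimized FFT library rather than this naive transform.

```python
import cmath
import math
import random
import statistics

def dft_magnitudes(samples):
    """Naive O(n^2) discrete Fourier transform; magnitudes for bins 0..n/2-1."""
    n = len(samples)
    mags = []
    for k in range(n // 2):
        acc = sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(samples))
        mags.append(abs(acc) / n)
    return mags

def noise_floor(samples, fundamental_bin, guard=1):
    """Median magnitude of the bins lying between the harmonic spikes."""
    between_spikes = []
    for k, magnitude in enumerate(dft_magnitudes(samples)):
        offset = k % fundamental_bin
        distance_to_harmonic = min(offset, fundamental_bin - offset)
        if distance_to_harmonic > guard:  # skip the spikes and their neighbors
            between_spikes.append(magnitude)
    return statistics.median(between_spikes)

def simulate_vibration(noise_amplitude, n=256, fundamental_bin=16, seed=1):
    """Hypothetical signal: two harmonics of the rotation plus Gaussian noise."""
    rng = random.Random(seed)
    return [
        math.sin(2 * math.pi * fundamental_bin * t / n)
        + 0.5 * math.sin(2 * math.pi * 2 * fundamental_bin * t / n)
        + rng.gauss(0.0, noise_amplitude)
        for t in range(n)
    ]

laminar = noise_floor(simulate_vibration(0.05), fundamental_bin=16)
turbulent = noise_floor(simulate_vibration(0.5), fundamental_bin=16)
print(laminar < turbulent)
```

A controller built on this measurement could lower the pump speed whenever the noise floor climbs above a calibrated threshold; choosing that threshold is outside the scope of the sketch.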

While such optimization has long been possible, it was economically viable only for large-scale industrial pumps such as those in an oil refinery. Today, they are economically viable for a broad range of applications, such as those one might find in light industry or agriculture. Such real-time performance tuning is widely applicable even for personal projects – for example, to allow a home theater system to adjust the sound to the presence of people (who change a room’s acoustics).

The explosion of data on the edge creates opportunities in the cloud, both for decision support / transaction processing as well as for machine learning. Data on the edge can be acted on in real-time at the edge, and also sent to the cloud for more intensive computation to improve the effectiveness of computing at the edge with better algorithms and heuristics. The following example is borderline fourth wave, because the smartphone is too expensive to be ubiquitous on the edge, but it illustrates the type of “Internet of Things” application stack that inexpensive, pervasive computing and sensors on the edge make possible, and a use case that we routinely benefit from:

- A navigation app receives time and location information from satellites to compute its location. The map is a graph whose nodes are locations connected by weighted edges. Each edge has weights based on distance, transit time based on speed limit, transit time based on traffic, expected fuel consumption, etc. A graph traversal algorithm which has been instructed by the user on what it should optimize computes navigation instructions. This computation must be performed entirely at the edge to ensure timeliness of the result.

- The navigation app transmits data such as its location, heading, speed, and destination to the cloud. Decision support software in the cloud that receives this information from navigation apps in multiple vehicles calculates actual transit times for each edge, and updates this information to navigation apps that can then dynamically update the navigation instructions they generate. While the navigation apps must execute in real-time, the decision support software executes in near real-time – transit times based on traffic and weather delays are statistical aggregations over short periods of time rather than the real-time instructions from the navigation app to the user.

- If you are driving across a large city, current transit times based on traffic and weather for edges corresponding to locations which you may not pass through for a half hour are less important for calculating routes than estimates of what they are likely to be when you get there. This requires deeper information such as historical traffic patterns, local weather forecast, and knowledge about planned crowd-generating activities such as sporting events. Generating this type of projected information for navigation apps requires cloud-based machine learning to generate algorithms that can then make projections.
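The edge-side route computation in the first item above can be sketched with Dijkstra's algorithm, one common graph traversal for weighted edges; the navigation apps discussed here may well use something else. The tiny road map, its place names, and its weights are invented for illustration.

```python
import heapq

def best_route(graph, start, goal, weight):
    """Dijkstra's algorithm over edges that each carry several named weights."""
    queue = [(0.0, start, [start])]
    settled = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in settled:
            continue
        settled.add(node)
        for neighbor, weights in graph.get(node, {}).items():
            if neighbor not in settled:
                heapq.heappush(queue, (cost + weights[weight], neighbor, path + [neighbor]))
    return None  # goal not reachable from start

# Hypothetical map: each edge has a distance and a current transit time.
road_map = {
    "home": {"highway": {"km": 12.0, "minutes": 9.0}, "main_st": {"km": 5.0, "minutes": 11.0}},
    "highway": {"office": {"km": 10.0, "minutes": 8.0}},
    "main_st": {"office": {"km": 6.0, "minutes": 14.0}},
}

print(best_route(road_map, "home", "office", "km"))
print(best_route(road_map, "home", "office", "minutes"))
```

The `weight` argument plays the role of the user's instruction on what to optimize. When the cloud sends an updated transit time for an edge, the app only needs to overwrite that edge's `minutes` value and run the traversal again.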

A fourth wave application stack based on our pump example above might look like this:

- In addition to the vibration sensor, each pump has an inexpensive input power sensor and output flow meter. The vibration sensor and software in each pump operate that pump at maximum efficiency, and the power sensor and output flow meter generate data that is collected by YottaDB and processed centrally (“in the cloud”). Also, the energy required to start the motor of each pump is easily computed at the edge from the energy consumption of multiple pumping cycles.

- If there is a pumping station with a reservoir of liquid and a set of pumps to pump it to the next stage, software in the pumping station can turn on and cycle pumps as needed, optimizing different parameters as directed, such as energy efficiency, pumping time, pumping deadline, volume required by consumers of the liquid pumped, etc.

- Machine learning can analyze pumping data as well as other information such as energy cost and pump failure data to make decisions to direct pumping operations, for example:

- When the sun shines, operate pumps for maximum pumping efficiency, e.g., because electricity comes from solar panels and has zero marginal cost.

- Otherwise, operate pumps for maximum energy efficiency, e.g., because without solar energy available the pumps must draw on battery power.

- If failure data is available, machine learning and statistical analysis in the cloud can also direct pump operation to minimize the probability of failure till the next maintenance period.

YottaDB Everywhere

On the Edge

YottaDB is very parsimonious of computing resources. The engine itself occupies just 10-12MB of space, even as it can operate databases that are limited only by available storage. Without a database daemon, it lends itself to low-power smart sensors and devices on the edge.

The robustness of YottaDB makes it a good candidate for devices that must operate autonomously. Databases can be configured to recover automatically after a power outage. Database replication means that the data can be streamed in real time to a hub or integration point, and indeed this allows for sensors and devices that have no persistent read-write storage – when such a device powers up, its database is restored from the replica on the hub or integration point.

YottaDB also gives you your choice of programming language. Effective r1.20, with its C API you can program in C, and since C is the lingua franca of computing, from any language with the ability to call C APIs. YottaDB also includes a complete implementation of M (also known as MUMPS), which was used to implement powerful software on computers far less capable than a Raspberry Pi Zero. The choice is yours.

In the Cloud

The following representative example illustrates YottaDB’s ability to handle very large databases. Consider a factory making equipment (such as vehicles) each of which has 100 (1E2) sensors. If the factory makes 100,000 (1E5) units per year, that is 10,000,000 (1E7) streams of data. If each sensor generates on average 1 byte/second during 1,000,000 (1E6) seconds of operation per year, that amounts to 10TB (1E13 bytes) per year, all of which can go into a single YottaDB database. If the factory has 10,000 (1E4) machines, each of which has 100 (1E2) sensors, that data can also go into the same database. With YottaDB everywhere, the data can be moved seamlessly and smoothly, under the direction of the design engineers, between the edge and the cloud. Warranty and service information can also go into the same database.
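The arithmetic in this example is easy to check. The short script below simply multiplies out the figures given in the paragraph above; the variable names are mine.

```python
sensors_per_unit = 100          # 1E2 sensors on each unit of equipment
units_per_year = 100_000        # 1E5 units made per year
bytes_per_second = 1            # average data rate per sensor
seconds_per_year = 1_000_000    # 1E6 seconds of operation per year

streams = sensors_per_unit * units_per_year
bytes_per_year = streams * bytes_per_second * seconds_per_year

# Streams from the factory's own machines: 1E4 machines with 1E2 sensors each.
factory_streams = 10_000 * 100

print(streams)                   # data streams from equipment in the field
print(bytes_per_year / 1e12)     # terabytes per year from those streams
print(factory_streams)           # additional streams from factory machines
```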

With statistical and machine learning techniques operating on the database, the manufacturer can generate predictive models of failure that use data streams from sensors at the edge to anticipate failures before they occur and to schedule maintenance before failures occur.

In Closing, Another Example

In addition to the discussion in this blog post, this hypothetical parking use case is an example that is representative of an Internet of Things application stack that spans the levels from sensors on the edge to machine learning in the cloud, discussing how YottaDB can provide data persistence at every level of the computing stack.

[1]. “Cloud” is just a recently invented term for the ability to remotely control computation environments.↩

[2]. Although some physical security can be implemented for edge computing devices, an attacker ultimately has physical access to edge computing devices.↩

[3]. On a personal note, that the computer center was the only air-conditioned building on campus in a city where summer temperatures routinely exceeded 100℉ hooked me onto computing.↩

[4]. A shout out to our provider Zoho, which provides a complete set of services for a small business like ours.↩

Featured Image : At electric driven pump of a water work nearby the Hengstey See, Germany, by Markus Schweisss

The Heritage and Legacy of M (MUMPS) – and the Future of YottaDB

Legacy and Heritage

In computing, the term legacy system has come to mean an application or a technology originally crafted decades ago, one important to the success of an enterprise, and which at least some people consider obsolete.1

But age alone does not make something obsolete – we still read and appreciate Shakespeare a half-millennium after his death, and paper clips from over 100 years ago are still familiar to us today. We must recognize that software is also part of our technical and cultural heritage (see Software Heritage).

As in much else in our daily lives, legacy and heritage are intertwined.

The Heritage of MUMPS

If an application needed a database in the late 1960s, it typically used IMS on a mainframe. IMS was capable, but expensive to use. It was also a time when the minicomputer industry was booming, especially in the technology belt around Boston. Researchers at Massachusetts General Hospital in Boston could afford minicomputers, and, aided by talent from the Massachusetts Institute of Technology just across the Charles River, created MUMPS – Massachusetts General Hospital Utility Multi-Programming System.

The key feature of MUMPS was hand-in-glove integration between a line-oriented, third-generation, procedural language (superficially not unlike Basic) and a schema-less, hierarchical, sparse, associative memory, key-value database – the original NoSQL database, although the term had not been invented then. The fact that a database access was syntactically just an array reference made programmers exceptionally productive. MUMPS included features that are commonplace today but were ahead of their time, including interactive, multi-user computing, and just-in-time compilation with dynamic linking.
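For readers who have never seen such a database, the following is a loose Python analogy of a schema-less, hierarchical, sparse key-value store. It is not M syntax and not the YottaDB API; the class `SparseTree`, its methods, and the sample data are hypothetical, and real implementations persist the data and order subscripts by their own collation rules.

```python
class SparseTree:
    """Toy in-memory analogy: values live at (name, subscript, ...) keys."""

    def __init__(self):
        self._nodes = {}

    def set(self, name, *subscripts, value):
        # Any node can hold a value; no schema and no parent node is required.
        self._nodes[(name, *subscripts)] = value

    def get(self, name, *subscripts, default=None):
        return self._nodes.get((name, *subscripts), default)

    def children(self, name, *subscripts):
        """Distinct next-level subscripts that exist below the given node."""
        prefix = (name, *subscripts)
        depth = len(prefix)
        return sorted({key[depth] for key in self._nodes
                       if len(key) > depth and key[:depth] == prefix})

db = SparseTree()
db.set("patient", "1234", "name", value="Ada")
db.set("patient", "1234", "visit", "2018-02-01", value="checkup")
print(db.get("patient", "1234", "name"))
print(db.children("patient", "1234"))
```

Note that nothing was ever stored at `("patient", "1234")` itself: the tree is sparse, so only the nodes that were set take up space.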

Its robustness, low cost, programmer productivity, and ease of use made MUMPS a de facto standard for clinical and life-sciences computing. The largest and most successful electronic health record systems are written in MUMPS, or derivatives of MUMPS, including a nation-scale EHR deployment.

The GT.M implementation of MUMPS extended the innovation, bridging the gap between MUMPS and traditional programming languages by compiling source code to object code in a standard OpenVMS object format that could be linked with code written in Pascal, C, etc., and, on UNIX/Linux, by being the first MUMPS implementation whose object code could be placed in shared libraries with the standard ld utility. It pioneered logical multi-site application configurations unconstrained by geography many years before major brand-name databases did.

With secure, robust, highly-performant optimistic concurrency control based transaction processing, the Profile/GT.M application set records such as the first real-time core-banking application to break through 1,000 online transactions per second and the world’s first core banking use of Linux.

The Legacy of MUMPS

The legacy of MUMPS is a direct consequence of its heritage, and in particular derives from its success.

- To provide dynamic program execution in computers with very limited memory, early MUMPS implementations directly interpreted source code, as shells do today. For efficient execution, this resulted in single character abbreviations for commands, e.g., “s” instead of “set”, and a cultural preference for short variable names. Combined with the line-oriented nature of the language, this meant that program lines were dense and information-rich, compared with code in its peers such as C. Dense programs were an asset when the programmer’s interface to the computer was an actual terminal.2

- The complexity of major applications necessarily mirrors the business complexity of the large enterprises they support. Furthermore, for real-time, patient-centric healthcare applications, application schema complexity necessarily mirrors that of the human body. Medical care is one of the most complex arenas of human endeavor; banking and financial services, the other major area of MUMPS’ success, is not far behind.

- The success of MUMPS means that MUMPS application code is long-lived. Twenty-first century applications can include code from the 1970s, written to different standards. Even if it has evolved since, it is not inconceivable for a young programmer today to encounter code originally developed by a grandparent.

Consequently, for novice programmers, mastery of an enterprise-scale MUMPS application comes slowly, and it is easy to conflate application complexity with the programming language. “A Case of the MUMPS” is a rant by a programmer who struggles with the learning curve but raises the same issues more expressively than I do.

By way of analogy, the narrow streets of historical cities can be challenging to drive through, even as their continued existence speaks to the very success of those cities. Especially in a historical city, one cannot start excavating for a new development before first accounting for how it will affect the city’s existing structure. MUMPS applications have much in common with cities like London, Paris, Rome, and Athens in that their continued relevance speaks to their success, even as their history complicates their ongoing evolution.

As a further complication, owing to a series of unfortunate events, one MUMPS vendor was able to acquire all other major MUMPS implementations bar GT.M. Thereafter, it dropped the name MUMPS and any pretense of participating in standards. In a strategy that built an enclosure with high walls around its customers, it evolved MUMPS into a language that is its own proprietary product. Since the world will not adopt a non-standard, proprietary language that is easy to get into but hard to get out of, this relegated their proprietary language to the evolutionary backwaters of programming languages. In turn, this slowed the development of MUMPS, which did not acquire some features of programming languages such as information encapsulation.

The Way Forward for YottaDB

YottaDB is a proven, rock solid, lightning fast, and secure NoSQL database for all programmers, whether or not they are using MUMPS.

For those already developing in MUMPS or considering MUMPS: since YottaDB is built on the GT.M code base and written to ensure backwards compatibility, YottaDB is, and will continue to be, an implementation of MUMPS, and a drop-in replacement for GT.M.

For programmers in other languages: effective r1.20, YottaDB exposes a C API. As C is the lingua franca of programming languages in that code in virtually any language can call a C API, this makes the YottaDB database accessible to all programmers. In the future, we anticipate creating standard, language-specific wrappers for other popular languages so that programmers in those languages do not need to code or maintain a call to a C API.

If enterprise scale applications are like cities, YottaDB is their infrastructure, proven today through years of use, and innovating and evolving for tomorrow. Whether you seek to replace an existing NoSQL database in an application, whether you seek to extend an existing application – for example, by building solutions for business intelligence, data warehousing, or machine intelligence to augment it – or whether you are building a new Internet of Things application stack, YottaDB is a proven solution for all your needs.

[1]. Of course, it is a completely different discussion as to who considers something obsolete and why. It is quite likely that application vendors who wish to sell new applications, or consultants who wish to sell migration services, are the ones who describe applications as “legacy.”↩

[2]. Unlike a window that you can resize to see more information, and whose font you can shrink to suit your needs, a terminal had a predetermined fixed maximum number of lines and columns to act as a window into a program’s code. Also, communication between the terminal and the computer was over a serial connection that operated at a very small fraction of the data rate of today’s networks.↩

![agracier - NO VIEWS [CC BY-SA 3.0 (https://creativecommons.org/licenses/by-sa/3.0)], via Wikimedia Commons](https://yottadb.com/wp-content/uploads/2018/02/340px-Side_Entrance_to_St_Pauls_Church_-_panoramio-170x300.jpg)